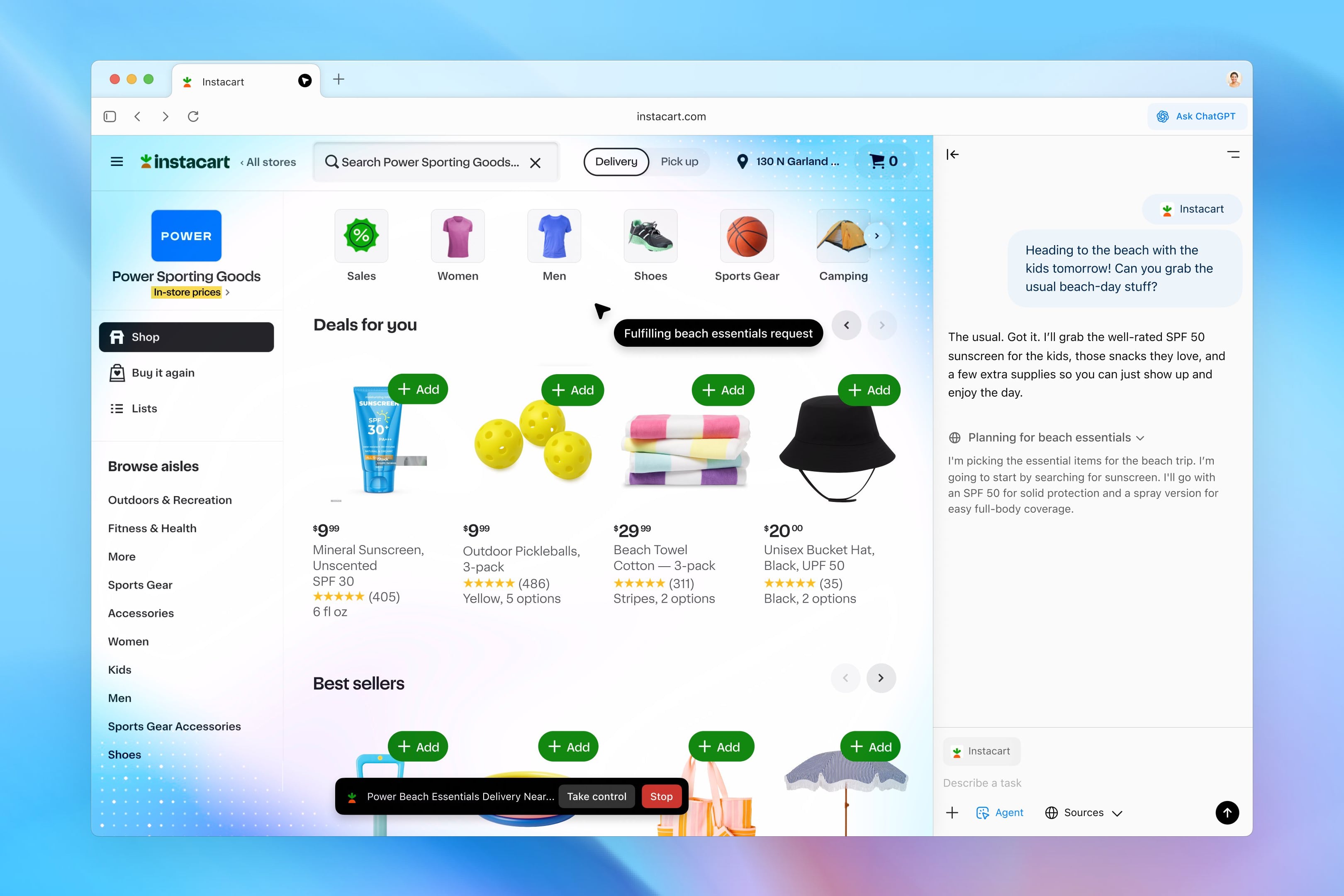

Meta announced that it is working on a series of new child protection features for Instagram, including one that blurs nude images sent or received through the app’s direct messages. It is a safety measure to combat sextortion, a practice in which someone uses intimate images to trick or pressure a user into doing something they don’t want to do.

Upon release, this feature will be activated by default for minors. (Instagram can identify them because it requires users to provide a birth date.) Adult users can activate it through the app’s settings, and Instagram will encourage them to do so through a notification.

The goal, the company says, is to “protect people from seeing unwanted nudity in their private messages” and to “protect them from scammers who may send nude images to trick people into sending their own images in exchange,” Meta said in a post.

Alerts when a user receives or sends a nude image

The feature will blur explicit images sent or received through Instagram direct messages. It will also display a message warning the user that the media contains nudity.

If the image was sent by the user, Meta will show a pop-up when they tap on it, alerting them that the recipient may have taken a screenshot without their knowledge. The pop-up will also give the sender the option to delete the image, while pointing out that the recipient may have already seen it.

The Instagram feature will also provide direct access to resources and information so that both minors and adults can protect themselves from practices such as sexual extortion.

Meta will use an algorithm to detect nudity on Instagram

Meta states that it uses an algorithm to determine whether a photo is an intimate image, and that under no circumstances does it have access to chats or images other than those that are reported.

The new Instagram feature is currently in the testing phase and is expected to be released to select users in the coming weeks. It will later be available worldwide, according to the Wall Street Journal.

Meta isn’t the only company adding this kind of protection to its messaging platform. A few years ago, Apple also announced nudity alerts in iMessage. They blur the image and give users the option to not view it, delete it completely, or report it. Like Instagram, Apple also displays a section called “Other ways to get help,” which points the user to a website with resources and advises them not to continue the conversation.

Source: Hiper Textual

I am Garth Carter and I work at Gadget Onus. I have specialized in writing for the Hot News section, focusing on topics that are trending and highly relevant to readers. My passion is to present news stories accurately, in an engaging manner that captures the attention of my audience.