Yandex has released a bilingual version of the Balaboba text generator, which now supports Russian and English, the company said.

Balaboba generates texts using Yandex's YaLM language model, which is designed for natural language processing tasks. YaLM models are used, in particular, in the “Alice” voice assistant, to improve order descriptions in “Services”, and to generate quick-answer cards in “Search”.

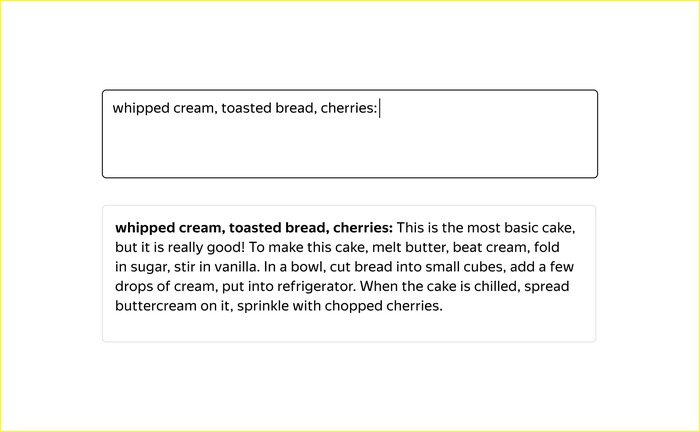

The user only needs to type one or two words in Russian or English and choose one of the styles. Balaboba then produces a coherent text on any topic, similar to the Internet texts on which the model was trained.

The text generator can write a short story or come up with a recipe, a set of instructions, or a piece of folk wisdom. Given the title of a film, Balaboba will write a plot for it.

To follow the rules of the language and choose appropriate words, Balaboba relies on the parameters built into the model, which are adjusted during training depending on whether a word was predicted correctly or incorrectly. Models in the YaLM family have anywhere from 1 billion to 100 billion parameters.

Yandex released Balaboba in June 2021. The underlying neural network has 3 billion parameters. To make the generated texts grammatically correct and lexically diverse, YaLM was trained on excerpts from Wikipedia pages, news articles, books, and public posts from social networks and forums.

Author:

Anastasia Mariana

Source: RB
